Last week, the Credentials Community Group of the World Wide Web Consortium hosted Scott Jones, sharing his company’s work on Client-side Biometric Authentication and Identity Verification. https://www.w3.org/events/meetings/6c106024-7f5f-4297-972b-18af6432aaef/20260203T120000/

He said a lot of smart things about his company, Realeyes https://realeyes.ai/, and their VerifEye offering. They are a leader in using AI and advanced biometrics for identity verification. I appreciated his discussion of how they are using real technology to improve the quality and privacy of identity assurance. In particular, I appreciate the progress towards client-side biometric authentication, which may prove a long-term best-in-class approach to securing our digital identities without creating a panopticon.

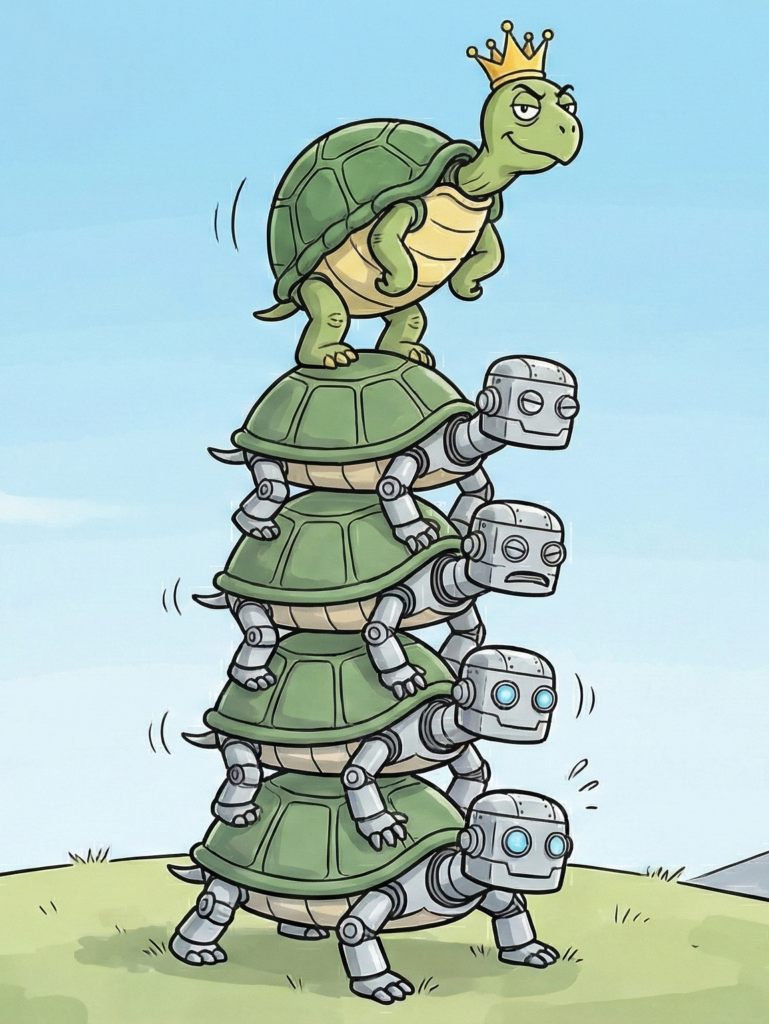

However, there is a fundamental flaw in their approach that deserves attention. Surprisingly, it is one that Dr Seuss’s Yertle the Turtle might have found familiar.

At the end of the day, after all the privacy-engineering on the front end, Realeyes maintains their own uniqueness database. To their credit, they are refreshingly candid about charging for access. They hope to create a global database of who is human and then charge to query that database. It’s a straightforward business model that helps us better understand how such a system might be abused or otherwise cause harm.

This vendor-controlled uniqueness database is the problem.

Worldcoin and World

Realeyes is essentially following in the footsteps of World https://world.org, formerly Worldcoin, the brainchild of Sam Altman as he seeks to establish “Universal proof of human, finance and connection for every human.” World is clear in the goal: “secure access to things only humans… should have access to.” The point is to create a list of who is (and implicitly who isn’t) human, specifically for the purposes of refusing services to those deemed less than human. World, of course, couches this in the context of Altman’s fear mongering about AI, but the language is surprisingly straightforward. If you aren’t deemed human by World, you will be denied services.

Both Realeyes and World establish a global uniqueness database draped in the language of privacy. Both have legitimate technical innovations that improve the quality of recognition. Both have privacy innovations that reduce the unnecessary exposure of PII. Unfortunately, both are fundamentally vendor lock-in businesses that, in the pursuit of profit, seek to dehumanize at scale. Both are playing from the same playbook, overpromising privacy benefits through buzzword bingo to justify building out a global database of humanity.

At the end of the day, they each control the set of humans in their uniqueness database. Only they can audit that database. Only they can correct errors in that database. And only they control the use of that dataset in other contexts, e.g., only allowing those who have signed up for their program to access certain services. Neither is an open system; both are clearly and unambiguously a mechanism for building a proprietary database they charge per transaction to query.

Global Uniqueness is the Problem, not the Goal

The notion of global uniqueness makes sense naively, but when considered more thoroughly, it’s a mirage that leads good people to build bad systems.

I have had multiple conversations with World and discussions with hundreds, perhaps thousands of people at the many identity conferences I’ve attended over the last decade, including the Internet Identity Workshop and the European Identity and Cloud Conference. I’m also an author, participant, and leader in the Rebooting the Web of Trust writing workshop and I’m the use case editor at the World Wide Web Consortium for both Decentralized Identifiers (DIDs) and Verifiable Credentials (VCs). In short, I’ve been exploring, curating, and documenting decentralized identity use cases for over a decade, and I have yet to find one that justifies a single universal database to uniquely identify every human on the planet for all time.

World argues that Universal Basic Income is that use case. A single database that can keep track of everyone, to monitor who received their share payment in this cycle. Seems legit at first glance. But no UBI has ever been truly global, nor is a single payment-per-person-ever the payment strategy for “income”. What actually happens is that a select group of beneficiaries, chosen by funders, receive regular payments for a limited time. That’s a stark contrast to the aspirations of Realeyes and World, which aim to identify everyone on the planet uniquely across all time.

The scope of uniqueness for UBI, even as imagined, is, in practice, limited in geography, humanity, and time.

- No solution will reach everywhere on the planet. Some jurisdictions will not tolerate this technology.

- No solution will include all people. There will be people who refuse to or can’t participate. People whose religious beliefs or physical disability preclude participating.

- No solution can track humans for all time. What is needed is tracking against a timeframe and cohort, e.g., membership or geography.

To make matters worse, automated solutions simply can’t handle death or other life events without additional public infrastructure based on either trusted authorities asserting marriage, births, and deaths or a mass surveillance system that observes these events for automated assessment and programmatic attestation.

One of the biggest problems in vital records is the erroneous perception by the bureaucracy of supposedly living constituents that are, in fact, dead. See UCL’s Ig Nobel Prize-winning research on blue zones. A global uniqueness database can’t, as a database, stay up-to-date without monitoring the real world, and we really don’t want a global surveillance system just to maintain a database of who is human or not. I also don’t want a database where any particular nation-state or corporation can declare me non-human. I live where I live; why track me globally? I don’t want to be in some database that is accessible in any way to [insert name of your favorite geopolitical enemy]. What I want is to be able to voluntarily choose which digital systems I participate in, run by organizations I trust.

What is needed for UBI, insurance claims, digital voting, or any other actual legitimate use of unique personhood is the assurance that, for a limited period of time, within a given population, a specific individual receives a restricted benefit no more than once. That isn’t helped by a global database of who is or isn’t human. It’s a bizarre non-sequitur to claim that it is.

For example, in UBI experiments in California, payments were made to specific individuals over a limited period of time, e.g., $500/month for 24 months. No global database would determine who is or isn’t in that set of limited individuals. No global database would keep track of who has been paid by that UBI program. Any solution that keeps those details in production longer than the limited time period of the UBI allowance is retaining personal information beyond its intended use. Rather than a system that is checked once to establish a permanent identifier for everyone for all time, functioning UBI systems need to track authorized distributions, for a limited time, to a limited population. A global uniqueness database doesn’t help do that; it increases complexity and introduces an outside party whose interests may or may not be aligned, without actually achieving its claimed goals.
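To make the point concrete, here is a minimal sketch of what "no more than once, for a limited time, within a given population" can look like as a purely local ledger. Everything in it is an assumption for illustration: the `BenefitProgram` class, the participant ID `local-id-17`, the dates, and the choice of a calendar month as the payment cycle are all hypothetical, not a description of any real UBI program's system.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class BenefitProgram:
    """A local, time-boxed ledger for one program (all names are hypothetical)."""
    name: str
    start: date
    end: date
    enrolled: set = field(default_factory=set)  # program-local participant IDs
    paid: set = field(default_factory=set)      # (participant_id, "YYYY-MM") pairs

    def enroll(self, participant_id: str) -> None:
        self.enrolled.add(participant_id)

    def pay(self, participant_id: str, on: date) -> bool:
        """Record at most one payment per participant per calendar month."""
        if not (self.start <= on <= self.end):
            return False  # outside the program's lifetime
        if participant_id not in self.enrolled:
            return False  # not part of this cohort
        period = on.strftime("%Y-%m")
        if (participant_id, period) in self.paid:
            return False  # already paid in this cycle
        self.paid.add((participant_id, period))
        return True

    def close(self) -> None:
        """Discard participant data once the program has ended."""
        self.enrolled.clear()
        self.paid.clear()


program = BenefitProgram("pilot", date(2024, 1, 1), date(2025, 12, 31))
program.enroll("local-id-17")
first = program.pay("local-id-17", date(2024, 3, 5))    # accepted
repeat = program.pay("local-id-17", date(2024, 3, 20))  # rejected: same month
```

Nothing here consults a list of all humans. The only questions asked are whether this ID belongs to this cohort, whether the program is still running, and whether this cycle has already been paid.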

It’s the Locality That Matters

It’s been suggested that “just about any solution is going to involve a database that is under the control of some party”. This also makes intuitive sense, as databases are where we keep track of data at scale. But what we don’t need is a global database of who qualifies as human. In fact what we need are local databases to keep track of the events and people that matter to them.

These contextual databases are both necessary and can be constrained to ensure the appropriate privacy boundaries are respected. A database that any individual or organization asserts as definitive for everyone on the planet is literally an attempt to centralize identification and control of our very humanity.

In contrast, any decision-making entity (including humans and organizations) will have good reasons to maintain a database of the individuals it is in the job of keeping track of. For example, the American Medical Association (AMA) maintains a database of its members.

But what the AMA doesn’t do is attempt to collect all of humanity into a single computational context. It does not attempt to create a global system where they alone get to decide who is human. They are creating a local system that does what they deem appropriate for their members’ needs.

Context collapse is at the heart of many, if not most, privacy harms created by centralized information systems. Global uniqueness, as envisioned by Realeyes and World, forces a global context collapse for all humanity for all time.

The fact is, we have NEVER had a singular information system that addresses all of humanity.

Period.

And we don’t want one. We really don’t.

Reality itself can’t even maintain a real-time global information context thanks to the speed of light. Even time can’t be treated as a universal. It flows faster and slower based on altitude and speed. It’s crazy. Race conditions for settling global ordering mean that even the best distributed system invented (Bitcoin) only has probabilistic, historic commitments to truth. Even Bitcoin can’t agree on which block is “at the tip” because that’s just not how it works.

We have only ever worked in isolated compute contexts dealing with individual perspectives and domains. Initially that was human cognition, then we built out institutional cognition with bureaucracy. Each bureaucracy is, necessarily, a construct and result of its own information architecture. Any bureaucracy that is attempting to intercede for all humans in all contexts is a misguided attempt to establish a control structure where that bureaucracy’s rules, beliefs, and values are imposed on everyone, typically placing that bureaucracy in a position to extract rents without delivering commensurate value. There are good reasons for different people to have different beliefs and values and I find it unethical to impose the beliefs and values of any subset on everyone else.

So… I don’t support any global set of supposed “truth” that is under the control of any single entity. And what is a more essential truth than whether or not someone is human?

Keep Humanity Human

I’m all for client-side biometrics as both World and Realeyes offer. What I’m not for is centralized lists of who is, and who is not, human. Any “uniqueness” database that isn’t specific to a jurisdiction, a community, or an initiative is an attempt to do just that: create a definitive list of who qualifies as human. Such a list of “unique” humans, used to deny services to non-humans, will inevitably and erroneously deny services to actual humans not on the list. In many cases, that means a loss of liberty, dignity, and basic human essentials.

If you want to keep track of who is or isn’t (a) subject to a jurisdiction, (b) a member of a community, or (c) a legitimate participant in a particular project, that’s a legitimate list of people of interest. Different processes maintain different lists for different organizations. That’s how society organizes itself. Done well, you get a decentralized tapestry of different jurisdictions, communities, and projects, that can all keep track of their participants without interference from centralized parties. This is literally how the global world order is maintained, today. By different entities taking care of their own business in their own way.

But what Worldcoin and Realeyes are banking their business model on is creating the ONE uniqueness database for everything, which they conveniently charge a fee to query. And if they succeed–when these uniqueness databases become the gatekeeper to public and private services–then those who can’t or won’t participate in their system will be treated as less than human, unable to participate as full members of our increasingly digitized society.

In contrast, what we are building at the Digital Fiduciary Initiative https://digitalfiduciary.org puts a human in the loop for identity verification, in a privacy-preserving yet auditable way that can be contextualized to the highest granularity. Any individual, organization, or cross-organizational initiative is free to manage their own list of participants with robust identity assurance and rigorous authentication, verification, and validation as those participants engage digitally. Humans determine who is human, not algorithms and definitely not databases listing all acceptable humans.

Eugenics, Exclusion, and Dehumanization

While many who advocate for global uniqueness databases are likely unaware of the ideological foundations of the approach, it is fundamentally an exclusionary and racist solution in the long tradition of eugenics. Those who advocate for eugenics argue that humanity deserves to be intentionally improved by accelerating births of those deemed fit and restricting the role of the “unfit” in society. If you don’t meet the criteria of goodness, you are less than human and your genes should be removed from the species. These criteria typically exclude the poor, disabled, and minorities using pseudo-science to justify who qualifies as worthy of human consideration, and who are treated as animals. https://en.wikipedia.org/wiki/The_Mismeasure_of_Man

The problem with proof of humanity, as imagined by Realeyes and World, is that my humanity is not subject to the judgment of any single entity. No nation-state. No corporation. No human. No one has the right, nor the authority to declare that I, Joe Andrieu, am not human. A system designed to separate humans from non-humans purely from placement on a list is a tool perfectly designed for enforcing nationalist, racist exclusion that treats those outside of the ruling class as less than human. And declaring certain classes of people as less than human is the hallmark of racist and eugenic movements.

On the other hand, every organization has a right to decide–on their own judgment–how they want to treat me.

That is what we do have the right to do: decide how we are going to treat others. We might treat people differently based on where they are from, how old they are, or what positions they may be selected for, but treating people differently because some vendor decides they don’t pass muster as a human is setting up society to defer our most fundamental judgment to an unaccountable intermediary. Should a nation-state decide that they refuse to treat me in a particular way, that’s within their domain. What they shouldn’t do is rely on the unaccountable, unauditable, uncorrectable proprietary systems like those offered by Realeyes and World.

The Fundamental Unknowability of Particular Humanity

Compounding the moral hazards of a global database is the fundamental unknowability of the human person on the other side of a digitally intermediated interaction. While we can build these systems, populate these databases, and restrict access to services based on who appears to be in some database or not, we cannot know for certain if the party we think we are interacting with has given their authentication means to someone else: such as when we hand someone our phone after activating it with a PIN or biometric.

To the phone, the current user is the authorized user, and to the extent that the phone owner did, in fact, authorize someone else to use the phone, that secondary user is authorized to use the phone, but they are not the unique person the phone imagines it to be. Any further interactions through the phone, relying on that confidence, will inevitably be in error.

This is a well known, but rarely discussed problem in digital identity. People regularly share passwords for convenience and expressions of intimacy. We let people sit at our desktop, while we are logged in to supposedly secure accounts. We hand people our phone unlocked and “authenticated”, giving full access to a range of capabilities as if they were the authorized party, even when that was never intended. Sharing our digital insurance card with the police officer during a routine traffic stop can give unintended access not just to content on the phone, but to actually act as the phone owner through that device. It is known that this is a common behavior, but because we don’t have good ways to stop it, digital identity engineers typically ignore it to address problems we have approaches to solve.

Unless we physically observe the person in question, it is impossible to tell if that digital interaction is actually being driven by that particular person. Yes, you can add checks. Liveness detection is a good one. Time-limited authentication challenges are another. Proof of use of secret cryptographic information is a good and rigorous filter. But all of these are ways to increase confidence in the identity of the subject, not a way to guarantee it. Every single technique might be defeated, enabling an attacker to act as the subject with impunity.

The confidenceMethod approach of the W3C Verifiable Credential community, currently under development, has set out to address precisely this problem, giving credential issuers additional ways to specify how the verifier of a given VC can increase their confidence that the current presenter has an appropriate relationship to the subjects in the credential. While we cannot know for sure who is on the other side of a digital interaction, we can use various techniques to increase our confidence that they are who we expect.

Agents & Humanity Online

Even if we build out these databases to their highest ambition, with World or Realeyes actually establishing a coherent system used by everyone on the planet, we still cannot guarantee that the alleged person on the other side isn’t an AI. And yet, that’s a fundamental promise of World and an implied expectation for Realeyes.

The fact is, people never directly interact with the digital world. Mediated through sensors like cameras and keyboards, all digital data is subject to the errors of its sensors. I, as Joe Andrieu, never actually make a GET request to an HTTP endpoint; that’s what my browser does for me. It is literally impossible for a standard webserver to process any direct human action. All it can do is respond to signals coming in over the wire. Conceptually, we consider the browser a “user-agent” meaning that we believe it is currently operating under the direct guidance of a human user, as an authentic agent, realizing the user’s will based on gestures made in the browser itself.

Any given HTTP request might be generated by a bot. Even within the browser, any extension or web page can trigger HTTP requests without the user realizing it. When these actions violate user expectations, it’s considered an attack, but at the core of the digital world are digits transmitted over wires. Those digits are subject to attack at the source, even if we secure them in transit. It is effectively impossible, today, to prevent colluding remote users from allowing someone else to use technology intended for them alone.

Delegation to Digital Agents is Inevitable

The fact is, we, as humans, are going to delegate our digital authority to software acting on our behalf. To the extent that their actions are well-behaved, meaning they cost no more than normal human activity, I believe those agents should be allowed to carry out the tasks I ask of them. No amount of remote attestation will prevent a person from giving an AI control over their digital interactions. If that means giving agents access to our cryptographic keys so they can impersonate us, people will do that. So-called “proof-of-control” or “proof-of-use” challenge-response techniques create a mathematical guarantee that the current user has use of cryptographic secrets we expect the user to keep secret, but that is not a guarantee of who that user is. There simply is no known way to cryptographically guarantee that the current user is the user we expect, no matter what kind of “holder binding” techniques you try.
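A small sketch may help show why a challenge-response check proves possession of a secret rather than the identity of whoever is using it. It assumes a shared-secret HMAC scheme purely for brevity; real deployments typically use public-key signatures, and the function names `respond` and `verify` are hypothetical.

```python
import hashlib
import hmac
import secrets


def respond(key: bytes, challenge: bytes) -> bytes:
    """Whoever holds the key can compute the response; the math never sees who that is."""
    return hmac.new(key, challenge, hashlib.sha256).digest()


def verify(key: bytes, challenge: bytes, response: bytes) -> bool:
    """Check that the response was computed with the expected key."""
    expected = hmac.new(key, challenge, hashlib.sha256).digest()
    return hmac.compare_digest(expected, response)


key = secrets.token_bytes(32)        # secret issued to the expected user
challenge = secrets.token_bytes(16)  # fresh nonce chosen by the verifier

from_owner = respond(key, challenge)  # computed by the person we expect
from_agent = respond(key, challenge)  # computed by an agent or friend given the same key

owner_ok = verify(key, challenge, from_owner)
agent_ok = verify(key, challenge, from_agent)
outsider_ok = verify(key, challenge, respond(secrets.token_bytes(32), challenge))
```

The owner and the agent produce byte-for-byte identical responses, so the verifier has nothing to distinguish them by. Only a party without the key fails the check.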

Online interactions go from compute device to compute device across the network. Given current Internet architecture, we can always redirect the authentication to a proxy controlled by a colluding subject. Always. Which makes it essentially impossible to stop collusive compromises where the data subject willingly gives their authentication capability or their authenticated device to another person.

What we can do instead is use cryptography to explicitly delegate authorizations of limited scope to agents operating on our behalf, whether they are a bot or not. What we can do is ensure that the digital transmission received from an alleged specific user carries a cryptographic proof that its sender is acting on behalf of that user. Yes, this takes infrastructure we haven’t built yet that connects cryptographic actions to privacy-preserving in-person proof-of-humanity ceremonies, but it is at least technically possible. IMO, that’s the real solution: create privacy-preserving in-person proof-of-humanity ceremonies that generate credentials that can be used as the root identity for delegations to automated systems. In other words, instead of trying to detect AI, enable affirmative delegation by humans such that whatever software we authorize can act–and be regarded as acting–on our behalf while avoiding spam-bots and overzealous web crawlers. Digital Fiduciaries can help.
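As a rough illustration of limited-scope delegation, the sketch below has a root key holder issue a grant that names one agent, a set of allowed actions, and an expiry, and has a verifier check all three. It is a simplification under stated assumptions: HMAC stands in for the public-key signature a real system would root in a credential from an in-person ceremony, so the verifier here shares the secret, and the names `issue_delegation`, `is_authorized`, `shopping-bot`, and the scope strings are all hypothetical.

```python
import hashlib
import hmac
import json


def issue_delegation(root_key: bytes, agent: str, scope: list, expires_at: float) -> dict:
    """Bind an agent, a set of allowed actions, and an expiry under the root key."""
    claims = {"agent": agent, "scope": sorted(scope), "expires_at": expires_at}
    payload = json.dumps(claims, sort_keys=True).encode()
    tag = hmac.new(root_key, payload, hashlib.sha256).hexdigest()
    return {"claims": claims, "tag": tag}


def is_authorized(root_key: bytes, delegation: dict, agent: str, action: str, now: float) -> bool:
    """Accept only an untampered, unexpired grant that covers this agent and action."""
    payload = json.dumps(delegation["claims"], sort_keys=True).encode()
    expected = hmac.new(root_key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, delegation["tag"]):
        return False  # claims were altered or issued under a different key
    claims = delegation["claims"]
    return (
        claims["agent"] == agent
        and action in claims["scope"]
        and now < claims["expires_at"]
    )


root_key = b"demo-root-key-for-illustration-only"
delegation = issue_delegation(
    root_key, "shopping-bot", ["read:catalog", "place:order"], expires_at=1000.0
)

in_scope = is_authorized(root_key, delegation, "shopping-bot", "place:order", now=500.0)
out_of_scope = is_authorized(root_key, delegation, "shopping-bot", "transfer:funds", now=500.0)
expired = is_authorized(root_key, delegation, "shopping-bot", "place:order", now=2000.0)
```

The verifier never asks whether the caller is a human or a bot. It asks only whether someone holding the root key authorized this agent to take this action within this window.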

Global Universal Identification Is Overkill

For some things, you don’t need identification. The Red Cross famously doesn’t care if your identification documents were burned in your house fire. They will help you reestablish your life, giving you vouchers that get you into motels and gift certificates you can use to buy clothes and they don’t need to see your government ID. Their confidence is met by evaluating a real emergency and interacting with the real people affected by it, including law enforcement and first responders.

For other things, even a RealID driver’s license is insufficient. If you want to fly a plane, launch a missile, or access secure facilities, additional confidence is required. Some facilities require biometric identification. Some don’t. Some require unique PINs coupled with unique digital cards. The fact is, for any given use case, secure systems are tailored to establish just the right level of oversight and assurance. In no use case do we see a legitimate need for a global human database.

We see the honest value is in contextualized, robust identification that combines digitally defensible mechanisms (e.g., encryption, signing, proof-of-use) with real-world, in-person identity assurance to enable identity-responsive services without reliance on centralized notions of who is or is not a human. We also see the danger of building a global database far outstripping any value it might create. The real effect of these systems of global uniqueness will be to reduce the humanity of those who aren’t part of the club. That’s simply not acceptable in a free society and it certainly is not acceptable as a global imposition by any individual or organization.

It’s Turtles all the Way Down

On a lighter note, as I wrote this, I realized that the tireless attempts of the naive to build a single digital perspective on everyone in the world are a bit of a Yertle the Turtle problem. The only way to win is not to play that game.

Yertle, King of the pond, famously demanded he stand on the backs of all the turtles he could find so that he could see all that he commands, expanding his kingdom over everything he sees. He foolishly believed that if he could just see a little bit more–by making his subjects stand on top of each other’s backs–he would increase his kingdom, only to find that no number of turtles could reach a height that would bring the Moon under his domain.

Digital Yertles imagine something similar: if only we could see everything in our domain, our rule will be glorious!

If only we could identify everyone, including those who should not be part of our efforts, then we can finally build a system that appropriately works for every individual.

It’s a slippery slope that none of us wants.

If only we could see all the activity in our domain, then we can ensure all illegal activity is punished.

If only we could track everything everyone does anywhere, then we can finally prevent these pesky crimes [insert favorite fear-based rallying cry] before they even happen.

Imagining an “ideal information system” that tracks everyone on the planet is as shortsighted and ineffective as Yertle’s pile of turtles, as impractical and cruel as Bentham’s panopticon, and as dangerous and insidious as Orwell’s Big Brother.

In short, that way lies surveillance madness.

We can do better.