If you’re going to bet your company on a platform, pick the open one.

That was my advice last month at the Caltech/MIT Enterprise Forum on Platforms. It turned into a lively debate (you can check out the audio for the June 8, 2008 event), almost inevitably pitting me against Peter Coffee of Salesforce.com, with Marc Canter rallying the forces of small business and openness in his inimitable style: caustic, irreverent, and showing no small amount of passion.

One small side effect of the debate was that, at times, it slipped unavoidably into a referendum on Salesforce.com rather than an insightful discussion of the merits of open versus closed systems and how companies can reasonably navigate their choices. Pulling back from that focus on Salesforce left more than a few issues unanswered. Peter Coffee contacted me after the event and asked to keep the conversation going, which sounds like a great idea.

So, here’s a reprise of my presentation on June 14.

Open Platforms and Standards

Level 4 Platforms FTW

This article is about open platforms and what an entrepreneur should think about when choosing a platform for their business.

Two Questions

When choosing a platform, you’ll want to consider two major questions:

- Will it do what you need?

- Will it last long enough for you to do it?

The first is a matter of features and functionality, and must be evaluated on a case-by-case basis for each business. Since I don’t know your business, I won’t spend much more time on this question.

The second question is about longevity. Will the platform itself be available as long as your business needs to use it? Will it be stable and robust enough on a moment-to-moment basis? Is there a self-sustaining community of developers and integrators to help your company keep adjusting as business needs change over time? In other words, will the platform continue to provide value for your business in the long term?

What it means to be Open

In talking about open platforms, I want to be clear what I mean by open. An open platform, for the sake of this article (and as far as I’m concerned, for the purposes of understanding the revolution of open systems), is one that adheres to the principles of N.E.A:

- No one owns it

- Everyone can use it

- Anyone can improve it

If a single entity or group owns the platform, it isn’t open. If there are barriers preventing users from accessing or developing on the platform, it isn’t open. If you can’t, with reasonable effort, improve the platform itself, it isn’t open.

Every platform is open to some degree. After all, that is the point: platforms open proprietary systems so that third-party developers can innovate and create value beyond the scope, resources, or expertise of the platform creators. But truly open platforms allow anyone to improve the actual platform, not just develop within its constraints. For example, SSL, the Secure Sockets Layer used to secure web access, was developed in the early days of the World Wide Web when a small group of innovators figured out a way to automatically encrypt information transmitted between a web browser and a website. They didn’t need to negotiate a contract or get permission; they simply implemented their solution and made it available to everyone, changing the platform of the World Wide Web itself.

When you bet on a closed platform, you are betting that the future of your company fits into the future as designed by the platform owner. When betting on an open platform, you bet that someone, somewhere, will be able to evolve the platform to meet your continuing needs.

NEA is a concept from World of Ends: What the Internet Is and How to Stop Mistaking It for Something Else, by Doc Searls and David Weinberger, written October 2003.

Marc Andreessen’s Three Platforms

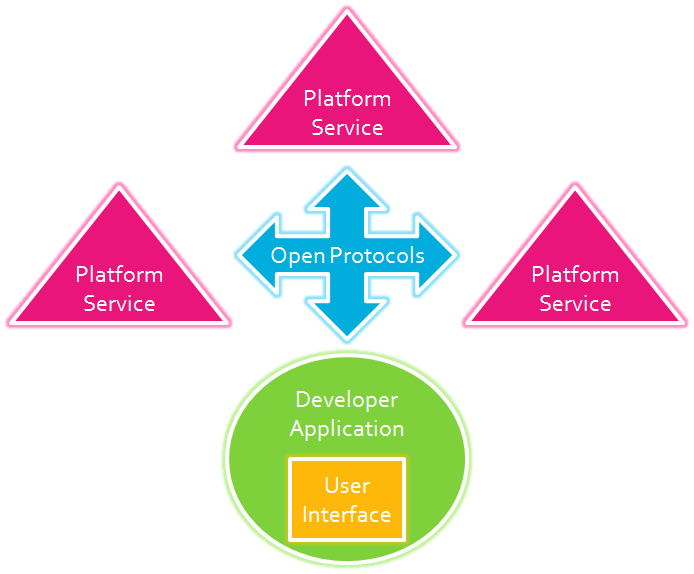

In September 2007, Marc Andreessen, one of the enablers of the World Wide Web, wrote an article titled The Three Platforms You Meet On the Internet. I’m going to revisit those platforms visually, and introduce a fourth kind of platform that Marc missed. Because the text may be hard to read, here’s a legend of the elements present in all four graphics.

- User Interface

- Developer Application

- Platform Enabler

- Platform Service Provider

With that, let’s look at Marc’s three levels of platforms.

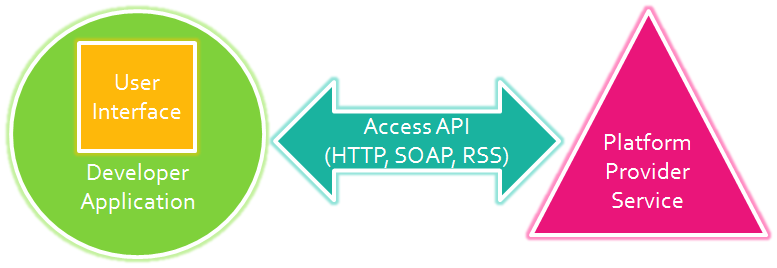

Level 1 Access API

Level 1 Platforms allow third-party developers to access the platform via a well-defined and documented API, typically using HTTP or SOAP. This allows developers to create their own applications, running anywhere the developer chooses, with access to the data and services running on the platform. Twitter, eBay, PayPal, Flickr, and Del.icio.us all support this type of interaction.
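To make that concrete, here is a minimal Python sketch of what a Level 1 client might look like. Everything specific in it is an assumption for illustration: the `https://api.example.com` host, the `/photos/<user>` endpoint, the JSON shape, and the function names are invented, and `fake_platform` stands in for the real server so the example runs without a network.

```python
import json
from urllib.parse import quote, urlencode


def build_photos_url(base_url, user, page=1):
    """Build the documented request URL (endpoint shape is invented for this sketch)."""
    return f"{base_url}/photos/{quote(user)}?{urlencode({'page': page})}"


def parse_photo_titles(body):
    """Pull the photo titles out of the platform's JSON response body."""
    return [photo["title"] for photo in json.loads(body)["photos"]]


def list_photo_titles(http_get, base_url, user):
    """Third-party code: it runs wherever the developer likes and sees only the API.

    `http_get` is any callable that takes a URL and returns the response body.
    """
    return parse_photo_titles(http_get(build_photos_url(base_url, user)))


def fake_platform(url):
    """Hypothetical stand-in for the platform's server; returns canned JSON bytes."""
    return json.dumps({"photos": [{"title": "Sunset"}, {"title": "Harbor"}]}).encode()


titles = list_photo_titles(fake_platform, "https://api.example.com", "alice")
print(titles)
```

The developer’s code never touches the platform’s internals. Swap `fake_platform` for a real HTTP call and the application can run on any machine, but the data and the rules still live with the one provider behind that URL.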

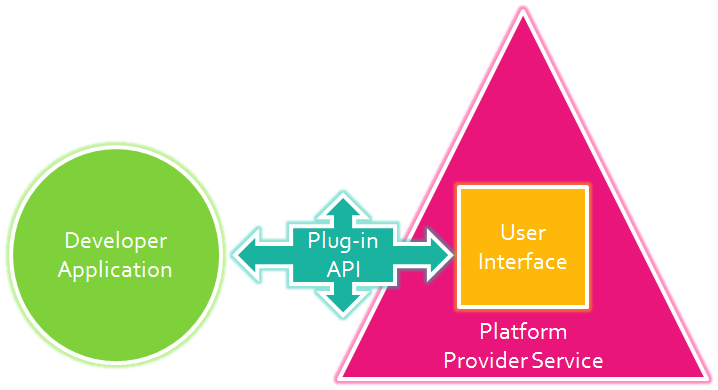

Level 2 Plug-in API

Level 2 Platforms allow third-party developers to create their application anywhere, with specific, limited ways to affect the user interface running on the platform. The key shift here is that the platform provider controls the overall user experience, but allows the developer a way to create value within their interface. Photoshop, Facebook, Firefox, Internet Explorer, and Outlook are all applications which act as Level 2 platforms.

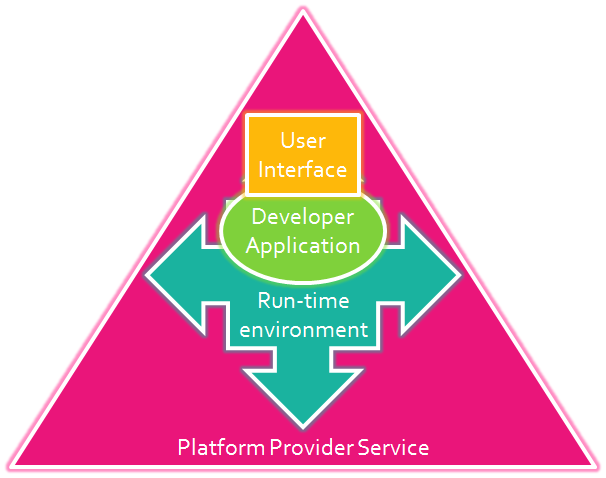

Level 3 Run-time Environment

Level 3 Platforms let developers create applications that actually run on the platform. That means the code written by third parties executes directly in the platform context. The overall user experience is still controlled by the platform, but compared to Level 2 platforms, Level 3 applications typically customize the user interface more extensively. Salesforce, Ning, OpenSocial, Windows, Java, EC2, and Google Apps are all Level 3 platforms.
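As a rough illustration of code running "in the platform context," here is a toy Python sketch. The `Platform` class, its methods, and the account record are all hypothetical; no real platform’s API is being described.

```python
class Platform:
    """Hypothetical Level 3 platform: it hosts the data and runs installed apps."""

    def __init__(self):
        self._apps = {}
        # The data lives on the platform, not with the developer (made-up record).
        self._accounts = {"acct-1": {"name": "Acme", "open_invoices": 3}}

    def install(self, app_name, render):
        """Register third-party code; `render` takes an account dict and returns text."""
        self._apps[app_name] = render

    def open_page(self, app_name, account_id):
        """Run the app inside the platform and wrap its output in platform chrome."""
        account = dict(self._accounts[account_id])  # hand the app a copy
        body = self._apps[app_name](account)
        return f"[platform header]\n{body}\n[platform footer]"


def invoice_summary(account):
    """Third-party app code: executed by the platform, in the platform's context."""
    return f"{account['name']}: {account['open_invoices']} open invoices"


platform = Platform()
platform.install("invoices", invoice_summary)
page = platform.open_page("invoices", "acct-1")
print(page)
```

The third-party function decides what goes in the body, but the platform decides when it runs, what data it sees, and what surrounds it on the page.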

Common Assumption

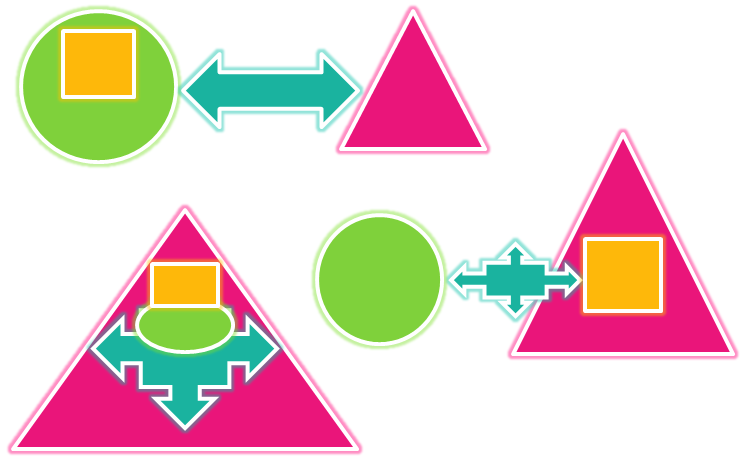

All three levels share a common assumption. You might notice it if you consider the three levels together:

All three levels assume a single platform service provider: Just one pink triangle. Of course, we can assume the number of developers is unlimited… that’s the point of a platform. However, all of the systems described above are built to enable access to one single platform, a platform owned and controlled by its creator.

When Marc wrote his article, I emailed him and asked, “What about the World Wide Web?” It doesn’t fit any of his models, yet is clearly one of the world’s most widespread, most successful, and most relevant platforms in history. On the World Wide Web, there is no central server, no platform provider. It is a different animal altogether. I call it a Level 4 Platform.

Level 4 Open Protocols

Level 4 platforms allow developers to build applications anywhere–on a website, on your desktop, even on your cell phone–and those applications can talk to any number of platform providers without restriction, using standard open protocols. Many of us have heard of the most successful protocols: SMTP, POP, HTTP, HTML, TCP/IP, RSS, but most users know these by the applications they enable: email, the World Wide Web, the Internet, blogs.
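Here is a minimal Python sketch of that idea, using email delivery as the example. It is a toy model, not SMTP: the `MailProvider` interface, the two provider classes, and the `.example` domains are all invented to show one application talking to several independent providers through a single shared contract.

```python
from typing import Dict, Protocol


class MailProvider(Protocol):
    """The shared 'protocol': any provider that implements this can take part."""

    def deliver(self, sender: str, recipient: str, body: str) -> str: ...


class BigHostMail:
    """One hypothetical provider, run by a large company."""

    def deliver(self, sender, recipient, body):
        return f"bighost accepted mail for {recipient}"


class GarageServerMail:
    """Another hypothetical provider, run by someone on their own server."""

    def deliver(self, sender, recipient, body):
        return f"garage-server accepted mail for {recipient}"


def send(providers: Dict[str, MailProvider], sender: str, recipient: str, body: str) -> str:
    """Application code: picks the provider by the recipient's domain, nothing more."""
    domain = recipient.rsplit("@", 1)[1]
    return providers[domain].deliver(sender, recipient, body)


providers = {"bighost.example": BigHostMail(), "garage.example": GarageServerMail()}
receipts = [
    send(providers, "me@garage.example", to, "hello")
    for to in ("ann@bighost.example", "bo@garage.example")
]
print(receipts)
```

Neither provider had to sign a contract with the application’s author, and the application needs no provider-specific code. Adding a third provider means adding one entry to the dictionary.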

Level 4 platforms are truly open, even as each piece of technology is provided by separate for-profit companies. It wasn’t until the World Wide Web opened online services to literally any company with a modicum of technical capability that the world began to enjoy the power of ubiquitous interactive services. As long as CompuServe and AOL and AT&T controlled tightly integrated one-stop-shop subscription services, the number of third-party developers was inherently limited. To launch a new service on AOL, you had to negotiate your own contract, all the while knowing that AOL could already be working on something similar and would never allow you to compete directly.

The web changed all that, allowing literally thousands upon thousands of entrepreneurs to explore their own (perhaps crazy) ideas of how to create value for people. No longer limited or burdened by a central platform provider, the pace of innovation exploded. Certainly, most of those ideas were crazy, but we couldn’t have known which were which without trying them first. The open nature of the web as a platform allowed exactly that.

The whole point of a platform is to encourage third party developers, so that everyone gets more value–value which is inherently beyond the purview of its creators. As long as a platform is closed to some degree, it is limiting the possibility for innovation. Limiting the innovation limits value to users. And limiting value to users limits the success of the platform.

Choose Open When Possible

The only guarantee of longevity is having options.

- Multiple Service Providers allow you to switch if you need to. You never know if your provider will continue to meet your needs. They may even start to compete with you. The freedom to move to another standards-compliant provider gives you control, just as you have over your web hosting company and email provider.

- Source Code allows you, or developers working on your behalf, to improve, fix, or simply maintain the platform your business depends on. If an open platform breaks, source code access keeps you from depending on the platform provider for a fix, just as you can fix things yourself with Apache, Linux, Joomla, or Drupal.

- Intellectual Property Use Rights assure users and third-party developers that they are free from fear of changing licensing terms and future lawsuits.

You can’t always choose an open platform. Sometimes it is worth it to develop on a proprietary platform because it helps you get to market faster, with more features. Depending on your needs, you might be better off building on a closed platform. But, if you can, I recommend choosing an open platform whenever possible.

Note on VRM

In case folks are wondering why I am talking about Level 4 Platforms, it is because that is precisely what we are working to build over at Project VRM, where I am the Chair of the Standards Committee.

VRM is the conceptual reciprocal of CRM, Customer Relationship Management. Instead of large-scale enterprise software that helps big companies extract more profit from every customer, VRM is about tools for individuals to create more value in their relationships with vendors.

- Our mission is to enable both buyers and sellers to build mutually beneficial relationships, where everyone benefits from the zero-distance network that is the Internet.

- Our approach is open standards and open source development.

- Our goal is a level 4 platform of the kind described above for user-driven online commerce.

If you can make it, you are welcome to join us at the 2008 VRM Workshop next week at Harvard’s Berkman Center for Internet and Society.

Bonus Link: Michael Cote on Platforms As A Service and Lock-in with Force.com

When advertising starts with the advertiser, it inherently wastes money, as it inevitably buys placement in ineffective or misaligned media. By now it is an old chestnut that advertisers waste half their budget–they just don’t know which half. Sometimes advertising is an investment in exploring potential markets… the goal is the data gained in the test marketing, which isn’t entirely a waste. Other times advertising is educational outreach where the goal isn’t so much to trigger a sale, but instead to introduce people to new products and services. Sometimes this is called demand generation. And that still leaves a vast amount of waste, buying media (offline or online) that just doesn’t perform or create any value. The potential savings in these areas is not only missing from Rubel’s analysis, I’d wager it is far more than $1 billion.

The huge potential of VRM is to turn these models inside-out, by providing a scalable pipeline directly into the product development and sales divisions of capable firms. Instead of Vendors guessing what people want, VRM services can cost-effectively tell Vendors what people truly do want. If the product is available, the sales team can enable purchase and delivery. If the product doesn’t exist, the Vendor can create it if demand is sufficient.

This guesswork and wasted advertising is probably closer to $100 billion/year, but that’s just my gut feeling. And that number only addresses the loss side of the equation, that is, the money we save by not wasting product development and advertising dollars. It ignores the value of products and services that today languish as innumerable missed opportunities–missed because companies have no way to efficiently gauge true market demand. There are undoubtedly services and products that exist–or could be profitably offered today–which fail to reach customers because we don’t have a suitable mechanism for connecting the right customers with the right companies. This potential to close the gap between potential sales and unmet demand is simply too large to estimate.

At the end of the day, interoperability requires either standards or one-to-one interoperability engineering. The user-centric Identity movement has grown like crazy in the last few years largely because a hybrid of these approaches has been used, as…
