The question of who owns our data on the Internet is a challenging problem. It can also be a red herring, distracting us from building the next generation of online services.

The term “ownership” simply brings too much baggage from the physical world, suggesting a win-lose, us-versus-them mentality that retards the development of rich, powerful services based on shared information.

Anyone up for sacred cow cheeseburgers?

I’m a member–and a big fan–of Steve Holcombe’s “Data Ownership in the Cloud” LinkedIn group, I love the efforts of the Dataportability guys, and I am a big supporter of the Privacy and Public Policy work group at Kantara. There is a lot of good work being done by folks trying to figure out how to give people greater control over the use of data about them (privacy) and access to data they use or create (dataportability).

Unfortunately, sometimes the arguments behind these efforts are based on who owns–or who should own–the data. This is not just an intellectual debate or a political rallying cry; framing the issue as ownership often undermines our common efforts to build a better system.

Consider this:

- Privacy as secrecy is dead

- Data sharing is data copying

- Transaction data has dual ownership

- Yours, mine, & ours: Reality is complicated

- Taking back ownership is confrontational

Privacy as secrecy is dead

First, the data is pretty much already out there. The issue isn’t “How do we keep data from bad people,” it’s “How do we keep people from doing bad things with data?” DRM and crypto and related technology as the sole means to prevent data leakage and data abuse are failures. Sooner or later, the bad guys break the system and get the data. Sure, there are smart things we can do to protect ourselves. Just like we wear seatbelts and lock our front doors, we should also use SSL and multi-factor authentication, but we can’t count on technology to keep our secrets. We need solutions that work even when the secret is out.

In fact, privacy isn’t about information we keep secret. It is about information we have revealed to someone else with an expectation of discretion, e.g., when we tell our doctor about our sexual activities. It’s no longer a secret from the doctor, but because it is private, we have rules that keep the information from being used inappropriately. Most of the time, with most doctors, it works. Those few who break those rules are dealt with through legal means, both civil and criminal, as well as social opprobrium. So, because we inherently need to release data to different parties at different times, we can’t control it through secrecy alone. Instead, we need to build a framework for preventing abuse when others do have access to sensitive information. As in the case of our doctor, we want our service providers to have the data they need to provide the highest quality services.

Data sharing is data copying

Second, in the world of atoms, there can only be one of a thing, which is the reverse of the world of bits. With atoms, even if there are copies, each copy is itself a singular thing. Selling, transferring, or stealing a thing precludes the original owner from continuing to use it.

This isn’t true for information, which can easily be sold, transferred, and stolen without disturbing the original version. In fact, the entire Internet is basically a copy machine, copying IP packets from router to router, as we “send” images, web pages, and emails from user to user and machine to machine–each time a new copy is created whether or not the originating copy is deleted. To think of bits as if they were ownable property leads to attempted solutions like DRM that try to technologically prevent access to the information within the data, which is only good until the first hacker cracks the code and distributes the data themselves. Instead, if we build social and legal controls on use, we can give information more freely, but under terms set by each individual when they share that information. Enforced by social and legal rather than purely technological means, this makes the most of the low marginal cost of distributing online, while retaining control for contributors.

Transaction data has dual ownership

Third, much interesting data is actually mutually owned… which means the other guy can already do whatever the heck they want with it. Consider web attention data, the stream of digital crumbs representing the websites we’ve visited and any interactions at each: all our purchases, all our blog posts, all our searches. Everything. Some folks argue that we own that data and therefore have the right to control the use of it. But so too do the owners of the websites we’ve been visiting. We don’t own our http log entries at Amazon. Amazon does. In fact, in every instance where two parties interact, where we engage in some transaction with someone else, both parties are co-creating that information. As such, both parties own it. So, if we tie the issue of control to ownership, then we’ve already lost the battle, because every service provider has solid claims to ownership over the information stored in their log files, just as we, as individuals, own the browsing history stored on our hard drive by Firefox, Internet Explorer and Chrome.

In the movie Fast Times at Ridgemont High, in a confrontation with Mr. Hand, Spicoli argues “If I’m here and you’re here, doesn’t that make it our time?” Just like the time shared between Spicoli and Mr. Hand, the information created by visiting a website is co-created and co-owned by both the visitor and the website. Every single interaction between two endpoints on the web generates at least two owners of the underlying data.

This is not a minor issue. The courts have already ruled that if an email is stored for any period of time on a server, the owner of that server has a right to read the email. So, when “my” email is out there at Gmail or AOL or on our company’s servers, know that it is also, legally, factually, and functionally, already their data.

Yours, mine, & ours: Reality is complicated

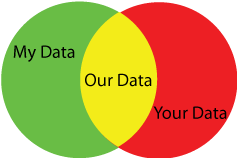

Fourth, when two parties come together for any reason, each brings their own data to the exchange. We need a framework that can handle that. Iain Henderson breaks down this complexity in a blog post about your data, my data, and our data, talking about an individual doing business with a vendor, for example, someone buying a car.

“My data” means data that I, as an individual have that is related to the transaction. It could include the kind of car I’m looking for, my budget, and estimates of my spouse’s requirements to approve of a new purchase.

“Your data” means data that the car dealer knows, including the actual cost of the vehicle, the number of units in inventory, the pace of sales, current buzz from other dealers.

“Our Data” means information that both parties have in common. That could be Shared Information, explicitly given by one party to the other in the course of the deal, such as a social security number so the dealer could run a credit check. It could be Mutual Information, generated by the very act of the transaction, such as the final sale price of the vehicle. Or, it could be Overlapping Information, which each party happens to know independently, such as the Manufacturer Suggested Retail Price (MSRP) of a vehicle (which we found online before heading to the dealership).

The ownership of “your” and “my” data is usually clear. However, ownership of the different types of “our” data is a challenge at best. To complicate matters further, every instance of “my data” is somebody else’s “your data”. In every case, there is this mutually reciprocal relationship between us and them. In the VRM case, we usually think of the individual as owning “my data” and the vendor as owning “your data”, but for the vendor, the reverse is true: to them their data is “my data” and the individual’s data is “your data”. Similar dynamics occur when the other party is an individual. I bring my data, you bring your data, and together we’ll engage with “our” data. We need an approach that respects and applies to everyone’s data, you, me, them, everybody.

In these complex Venn diagrams of ownership, it is more important who controls the data than who owns it. We’ve already lost the crudest form of control–secrecy–and we are going to continue to lose more as we opt-in to seductive new services based on divulging more and more information: our purchase history, browsing activity, and real-world location data. But we still need to control how all this data is used, to protect our own interests while still enjoying the benefits of the great big copy machine that is the Internet.

Taking back ownership is confrontational

Fifth, we don’t need to pick a fight to change the game. There is a lot of data out there that many of us believe we should have control over. I agree. A lot of people argue that we should have the right to exclude other people’s use because we own the data, because it’s ours in some legal, moral, or ethical framework. The problem is, those other people already have it, and they also believe that they are legitimate owners. In fact, many of them paid for that data, buying it from data aggregators who compile all sorts of things about people, from both public and private sources. This entire ecosystem of customer data is a multi-billion dollar business and every single player “owns” the data they are working with. So if we focus our energy on claiming ownership over that same data in order to take control, we are framing the conversation as a fight, a fight against a powerful, well-heeled, well-funded, entrenched bunch of opponents.

Most of these “opponents” are the very people we are trying to win over to our way of thinking. These are the vendors we want to embrace a new way to do business. These are the technologists we want to transform their proven, value-generating CRM systems to work with our data on our terms, instead of their data on their terms. Arguing over ownership puts these potential allies on the defensive, when what we really want is their collaboration.

From Ownership to Authority, Rights, and Responsibilities

Rather than building a regime based on data ownership, I believe we would be better served by building one based on authority, rights, and responsibilities. That is, based on Information Sharing.

- Who has the authority to control access and use of particular information?

- What rights does a party have in using and distributing a piece of information?

- What responsibilities does an information user have to others with respect to that information?

Let’s stop arguing about who owns what and start figuring out how we can share information in ways that allow everyone to win.

When we collect all of our information into a single conceptual repository, and then share access to it with service providers on our own terms, we create a high quality, highly relevant, curated personal data store. This allows us to bootstrap a control regime over all of our data in a way that creates new value for us and for our service providers. Now, instead of iTunes Genius or a Last.FM scrobbler only having access to our media use with their service, they can provide recommendations based on all the information stored in our personal audio data store. We get better recommendations and they get better data to drive their services. This personal data store is entirely under the authority of the user, sharing information with service providers according to specific rights and responsibilities.
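To make the idea of a store "under the authority of the user" a little more concrete, here is a minimal sketch in Python. It is only an illustration under stated assumptions: the class names, field names, the provider name `music-recommender`, and the sample data are all hypothetical and do not come from any Kantara specification. It shows one way authority (only the individual issues grants), rights (permitted uses), and responsibilities (obligations) might fit together.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class SharingTerms:
    """Hypothetical terms attached to one grant; names are illustrative only."""
    permitted_uses: frozenset   # rights: what the provider may do with the data
    obligations: frozenset      # responsibilities: what the provider must honor


@dataclass
class PersonalDataStore:
    """Conceptual store under the individual's authority (an assumed design)."""
    owner: str
    records: dict = field(default_factory=dict)  # category -> list of items
    grants: dict = field(default_factory=dict)   # (provider, category) -> SharingTerms

    def add(self, category, item):
        self.records.setdefault(category, []).append(item)

    def grant(self, provider, category, terms):
        # Authority: only the individual decides who gets access, and on what terms.
        self.grants[(provider, category)] = terms

    def revoke(self, provider, category):
        self.grants.pop((provider, category), None)

    def request(self, provider, category, use):
        """Return a copy of the data plus obligations, if the stated use is covered."""
        terms = self.grants.get((provider, category))
        if terms is None or use not in terms.permitted_uses:
            raise PermissionError(f"{provider} may not use {category} for {use}")
        return list(self.records.get(category, [])), terms.obligations


# Usage: share listening history for recommendations only.
store = PersonalDataStore(owner="alice")
store.add("audio", "played: Kind of Blue")
store.add("audio", "played: Blue Train")
store.grant(
    "music-recommender",
    "audio",
    SharingTerms(frozenset({"recommendations"}), frozenset({"no-resale"})),
)
data, obligations = store.request("music-recommender", "audio", "recommendations")
```

Note that the check in `request` only governs what the store hands out. Once a copy has been released, honoring the obligations depends on the social and legal enforcement discussed above, not on the code.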

The Information Sharing approach neatly sidesteps the complexities involved in privacy and dataportability issues of the information already known by service providers. These remain serious issues, worth addressing. Resolving them will require long term investment in the legal, regulatory, moral, and political systems that govern our society. Fortunately, sharing the information in our personal data store can begin almost immediately once we have working specifications.

This controlled sharing of information will dramatically increase our comfort level when revealing our intentions and interests. We would have control over the use–and would be able to prevent abuse–of that information, while making it easy for service providers to improve our lives in countless ways.

At the Information Sharing Work Group at the Kantara Initiative, Iain Henderson and I are leading a conversation to create a framework for sharing information with service providers, online and off. We are coordinating with folks involved in privacy and dataportability and distinguish our effort by focusing on new information, information created for the purposes of sharing with others to enable a better service experience. Our goal is to create the technical and legal framework for Information Sharing that both protects the individual and enables new services built on previously unshared and unsharable information. In short, we are setting aside the questions of data ownership and focusing on the means for individuals to control that magical, digital pixie dust we sprinkle across every website we visit.

Because the fact is, we want to share information. We want Google to know what we are searching for. We want Orbitz to know where we want to fly. We want Cars.com to know the kind of car we are looking for.

We just don’t want that information to be abused. We don’t want to be spammed, telemarketed, and adverblasted to death. We don’t want companies stockpiling vast data warehouses of personal information outside of our control. We don’t want to be exploited by corporations leveraging asymmetric power to force us to divulge and relinquish control over our addresses, dates of birth, and the names of our friends and family.

What we want is to share our information, on our terms. We want to protect our interests and enable service providers to do truly amazing things for us and on our behalf. This is the promise of the digital age: fabulous new services, under the guidance and control of each of us, individually.

And that is precisely what the Information Sharing Work Group at Kantara is enabling.

The work is a continuation of several years of collaboration with Doc Searls and others at ProjectVRM. We’re building on the principles and conversations of Vendor Relationship Management and User Driven Services to create an industry standard for a legal and technical solution to individually-driven Information Sharing.

Our work group, like all Kantara work groups, is open to all contributors–and non-contributing participants–at no cost. I invite everyone interested in helping create a user-driven world to join us.

It should be an exciting future.

This material is based upon work supported by the National Science Foundation under Award Number IIP-08488990. Any opinions, findings, and conclusions or recommendations expressed in this publication are those of the author and do not necessarily reflect the views of the National Science Foundation.

Well done, Joe – this is an excellent analysis – both thoughtful and thought-provoking.

I agree: wrangles over “ownership” are a red herring and reveal little or nothing of value. It is far more useful and constructive to ask questions like “what rights do I have over this piece of information about me?”

I look forward to working on this with you through Kantara…

Relatively good post, Joe, but flawed at the end. See my response at

http://vquill.com/2010/01/which-ox-are-you-goring.html

Dave,

I agree with your point that we need a way for vendors to reach us when we want to. But that isn’t necessarily advertising and it certainly isn’t SPAM.

I’d suggest that request-based communications can never be SPAM, as long as the sender is actually addressing my request with the frequency I wanted.

The problem with today’s model is that when I give out my contact information (which most websites want in one form or another), there isn’t a standard way for me to let potential vendors know that “I only want to hear about product X” and “it’s ok to call between 10AM and 12PM on any work day.” Or “Send me product recall notices anytime and no more than one promotional offer per quarter.”

The painful regulatory work-around for that was the national do-not-call list in the US. The ad-hoc work-around good vendors use is to have multiple opt-in check boxes for different types of outreach. But /none/ that I’ve seen allows the recipient to specify (a) the type of content (other than picking from a list of vendor-chosen options) and (b) the frequency of messages.

But if our contact information were served from our personal datastore–making it always up-to-date–along with the rights and responsibilities of the recipient, then we could in fact let vendors know how they can politely reach out to us without it being SPAM. In fact, a use case we are working on at the User Managed Access Work Group at Kantara is designed to use the personal datastore as an always-on protected service end-point, so the only contact information you give out has built in controls on incoming access.
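As a rough illustration of what recipient-defined contact terms could look like, here is a small Python sketch. It is not the User Managed Access design; every name in it is hypothetical, and a quarter is approximated as 91 days for simplicity. It encodes the earlier example: recall notices anytime, at most one promotional offer per quarter, and nothing else.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class ContactRule:
    """One recipient-defined rule; all names here are hypothetical."""
    topic: str                    # free-form topic chosen by the recipient
    max_messages: Optional[int]   # None means "anytime"
    window: timedelta             # period over which the limit applies


@dataclass
class ContactPolicy:
    rules: dict = field(default_factory=dict)  # topic -> ContactRule
    sent: dict = field(default_factory=dict)   # topic -> list of send times

    def allow(self, rule):
        self.rules[rule.topic] = rule

    def may_send(self, topic, now):
        """True if a message on this topic at this time honors the policy."""
        rule = self.rules.get(topic)
        if rule is None:          # topics the recipient never listed are refused
            return False
        if rule.max_messages is None:
            return True
        recent = [t for t in self.sent.get(topic, []) if now - t < rule.window]
        return len(recent) < rule.max_messages

    def record(self, topic, now):
        self.sent.setdefault(topic, []).append(now)


policy = ContactPolicy()
policy.allow(ContactRule("product recall", None, timedelta(days=1)))
policy.allow(ContactRule("promotional offer", 1, timedelta(days=91)))

jan = datetime(2010, 1, 15)
first_offer_ok = policy.may_send("promotional offer", jan)
policy.record("promotional offer", jan)
second_offer_ok = policy.may_send("promotional offer", jan + timedelta(days=30))
later_offer_ok = policy.may_send("promotional offer", jan + timedelta(days=120))
recall_ok = policy.may_send("product recall", jan + timedelta(days=1))
newsletter_ok = policy.may_send("newsletter", jan)
```

The point of the sketch is that both the topic list and the frequency come from the recipient rather than from a vendor-chosen set of check boxes.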

So, perhaps we aren’t that far off from what you’re suggesting.

Wow! Such passion! Such excitement! Such salesmanship! So much wool over so many eyes! Such a presentation demands some thoughtful consideration of the parts being waved away, covered up, ignored, and missed.

There is a profound gap between the information we ourselves share directly with some service provider (telling Google what we’re searching for) and what you’re willing to admit as “abuse.” I call the vast majority of that gap “abuse,” and furthermore I prefer that any questionable areas be decided in favor of privacy–not commerce, not sharing, not business, not anyone or anything but myself.

It sounds to me as if you’re intent on creating a world where I lose control of the vast gap–not because there are no knobs for me to turn, but because there are so many of them that I can’t possibly keep track of them all. I am profoundly opposed to that goal and approach.

I have no interest in enabling service providers to do truly amazing things that magically transfer my money to their wallet, or my life to the microscope slides of all their partners, or my information to the whims of their bankruptcy courts.

I have no desire to consolidate all my personal information into a single container, where bugs or oversights can reveal it to those I did not approve.

I am already beset and inundated and filled to overflowing with amazing things. I need some help cutting back, not empowering more.

Jack,

I hear you about information overload and too many switches, buzzers, and buttons.

However, today you have effectively NO control. When you post to Gmail, Twitter, your blog, or Facebook, you are generally giving up most of your rights to control that data. When you submit a query at Orbitz or at eBay, there is virtually no ability for you to manage anything about the subsequent use of that information. At best, there is a Terms of Service (TOS) that describes what rights you have and have given up, but individual users have very little influence over the terms in each TOS.

Consider two regimes:

1. You provide information to service providers under an explicit Information Sharing agreement, on your terms, which in your case would heavily favor privacy.

2. You provide information to service providers, protected by legislative privacy controls about the use of your data.

Which do you think is more likely to happen sooner? Which do you think will end up offering privacy controls more suited to your preference?

Given how hard it is for the US Congress to get anything done and the ease with which big corporations can manipulate the congressional process to get the results they want, I’m definitely willing to bet that both of those questions will be better answered by the Information Sharing approach. At the same time, I continue to support strong legislative controls to both put teeth into user-provided terms of sharing, /and/ to establish strong privacy and portability controls independent of any TOS or Information Sharing agreement (ISA). I just think the latter is much more complicated and much too slow to help us in the near term.

Btw, you have two assumptions underlying your closing comments, which need not be true. First, your “personal datastore” need not be a single honey pot centrally located. The data can be distributed in multiple places anywhere online: pictures at Flickr, blog posts at Blogger, status updates at Facebook, LinkedIn, and Twitter, and location updates at FourSquare. The personal datastore is the aggregate of all of these, all under your control. For ease of use, you may want to manage your permissions for these all through a single Relationship Manager, but that’s an overlay on top of the security of the individual services.

Second, there’s no reason your use policies have to be a black list that prohibits specific behaviors and allows everything else. Instead, you can set up your policies to be a white list of acceptable behaviors. In fact, the white list approach is really the only means I’ve been working with in pushing forward the Information Sharing scenarios, and a significant portion of the work ahead is finding the sweet spot of clearly articulated uses that support both individuals’ needs and those of the data requester.
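The difference between the two kinds of list shows up as soon as someone invents a use nobody anticipated. Here is a tiny Python sketch of that; the function names and the example uses are made up for illustration and are not drawn from any Information Sharing specification.

```python
def blacklist_allows(use, prohibited):
    """Black list: anything not explicitly prohibited is allowed."""
    return use not in prohibited


def whitelist_allows(use, permitted):
    """White list: only explicitly permitted uses are allowed."""
    return use in permitted


prohibited = {"resale", "telemarketing"}
permitted = {"fulfil order", "product recall notice"}

# A use that neither list anticipated.
new_use = "behavioral profiling"

allowed_under_blacklist = blacklist_allows(new_use, prohibited)
allowed_under_whitelist = whitelist_allows(new_use, permitted)
```

Under the black list the unanticipated use slips through; under the white list it is refused until the individual chooses to add it.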

The result of Information Sharing should be a serious reduction in the noise in your information flow, as you can fine tune what you want from vendors and potential vendors. I believe that only by providing more explicit controls for your incoming data stream can we hope to reduce information flow to a manageable rate. At some point, we need a way to proactively shape our incoming communications so that we get what we want, when we want it. And /that/ is going to mean sharing more information with those who are reaching out to us, whether that is to get us to buy products, vote for a candidate, or be alerted to current weather hazards in our area.

In short, we’ve already opened up the Pandora’s box of the digital age. Information is overwhelming us. The task ahead is to figure out how to get some control back, and frankly, I don’t see the government stepping in soon enough to be much use.

An interesting article until it gets to “From Ownership to Authority, Rights, and Responsibilities”, at which point it is seriously flawed.

The reason is that it only talks about the person “sharing access to it [their personal data] with service providers on our own terms”

This will never fly. This is because the author has immediately cut out the other party that has a stake in my personal data (e.g. the data issuer). Until we realise that there are multiple stakeholders who each have their own policies, and that the data store should evaluate all of these policies in an impartial manner, only then will the idea of the personal data store really fly. Until that time SPs will never release their copy of my personal data to my personal data store (e.g. my buying history, my credit rating etc.).

David,

If by data issuer you mean the host where my data is stored, I think you’ll find that there are many, many services already opening their doors, using technologies like Facebook Connect and OAuth. Others, like Mint and Wesabe, are simply asking for your credentials and logging in as you to screen scrape the data.

Most consumers may not be aware of the rapid changes happening in data access, but all the active companies in this space want users to be able to weave a seamless experience online, no matter where the data is stored, no matter where it is presented. This mashup approach is the natural continuation of the victory of the open Internet versus the walled gardens of AOL and CompuServe. The fact is, everyone who hosts data wants to provide an API so that they can win the battle to become your default store for that particular datatype; see Flickr and Twitter and YouTube for excellent examples of this. In response, everyone who presents an interface for accessing information wants to be able to aggregate all of the sources of data you have, wherever they are.

As a case in point, and directly counter to your closing assertion that “SPs will never release … my buying history”, look at Beacon and Blippy and their sole purpose of exposing your buying history so that it can go from one datastore (the point of purchase) to others (Facebook, Twitter). Beacon failed, but not because SPs weren’t willing to give out the data. It failed because users were freaked out. Blippy is doing essentially the same thing, but with a much better framing for users who want to opt in to its model.

Thanks for the comment, but I have to say, I think the smart service providers are already opening up and connecting as fast as they can get the code written to support the latest specifications.

Thanks for your reply. I realise that my use of the word “release” was ambiguous. I did not mean allow someone to take a copy (which you implied, and which I agree may well happen); rather, I meant give away and no longer hold a copy of the data, and instead rely on my personal data store to be the holder of the master copy. This is what I am asserting will never fly.

regards

David

David,

Well, we don’t really need them to give up their existing copy for us to make use of it elsewhere, with other vendors. Eventually, I believe we will be able to wean them from their insistence on stockpiling data, just as OpenID, Information Cards, and user-centric identity are starting to wean websites from managing usernames & passwords–something that met with similar skepticism when it was introduced.

There will remain data that companies are going to need to keep no matter what.

I believe that access to the information stored in the personal datastore will prove far more valuable than most of what is currently hoarded by corporate IS departments. Once that transition starts to happen, companies will pull budgets from overzealous IS and streamline and minimize the actual data they do keep. Seriously, the maintenance cost on good data is non-trivial. Companies will eventually be forced to clean house and harbor only the data they really need to compete effectively.

So, for now, why fight over the data they aren’t going to give up yet anyway? Let’s start by transitioning to high quality, volunteered information under specific terms. That will lay the groundwork for the eventual restoration of balance in the relationship, at least as far as the data is concerned.

The key challenge of this initiative would seem to be the “control” part. How exactly can I maintain control over the use of information I choose to share with service providers? Because once I’ve shared this information, I no longer can physically prevent others from making use of it in any way they desire. Even if I could clearly articulate a set of conditions under which these service providers would be allowed to make use of my information, there is the question of enforcement. Most likely, my willingness to share information will come with some sort of legal contract that obligates the service provider to treat my information in a manner that I specify. But how can I ensure that the service provider will honor this contract?

One possibility is that I might only be willing to share my information with service providers that I “trust”, based on some sense of their reputation or even some certification they may have for being trustworthy. In this case, I would be relying on the service providers to be “good guys” and play by the rules, because of the trust I have in them.

On the other hand, I may know nothing about the service provider, but might be willing to share information anyway if a legal contract is in place that obligates the service provider to honor the constraints I place upon the use of my information.

But contracts and trust can still be broken. When it comes right down to it, my ability to control the use of my shared information seems to depend upon the threat of a lawsuit….either the service provider treats my information as I specify, or they get sued!

But for individuals choosing to share their information, the use of a lawsuit as the ultimate enforcer of control is problematic. Filing lawsuits is costly and time consuming, especially for individuals who do not have a stable of lawyers at their disposal. A large service provider that misuses the shared information would be better able to handle the burdens of a lawsuit than would the individual seeking to enforce the constraints that he/she placed on the shared information.

So this is the real challenge, I think. Can a new legal framework be devised that would allow individuals to enforce their rights to share information on their own terms, without the burdens that current legal systems impose on those seeking to enforce other types of contractual obligations? What would such a framework look like? Or will individuals still need to ultimately rely on the threat of a lawsuit to enforce their rights over shared information?

Bob,

I think the only meaningful controls must be both social and legal, and enforceable through criminal and civil courts as well as the “court” of public opinion.

I like the way Bill Washburn puts it. His grandmother used to say “I can’t make you do anything, but I can make you wish you had.”

The shadow of the future is how our society prevents and manages anti-social behavior: the risk that you will be caught and face social, legal, and even physical punishment. For many, the mere threat of social ostracization is enough. For other cases, even facing the death penalty is not enough to change behavior. We don’t prevent people from driving cars because they might run a red light and kill someone. We don’t prevent strangers from entering a bank because they might rob it. And we don’t require controlled containers for gasoline to prevent people from making Molotov cocktails. No, we “control” these things through the shadow of the future.

So, you’re right, once the data is out there, there’s nothing we can do to literally prevent abuse–not any more than we can “control” other kinds of crime in our society. But we can make them wish they hadn’t.

I think the trick is enabling enforcement at scale, so that it can be done cost-effectively. That could mean proactive data labeling, explicit contract exchange prior to data release, and even automated audits (although I worry about the burden of the audit overhead for many use cases). It’s gotta be simple, fast, and effective. In fact, the economics of enforcement have got to be better than the economics of exploitation. That’s been the real problem with most data-related crimes. It’s so cheap to hack & copy and so hard to track down and punish. I’m not sure we have a good answer to thwarting determined criminals, but I do think we have an answer for the vast majority of companies that want to work with clean data, whose reputation depends on above-board behavior. The social opprobrium of screwing over your customers–and its cost in both revenue and stock price–just isn’t worth it to squeeze a few more pennies out.

Very interesting article Joe. I have written a few articles on http://wordpress.3dn.nl/category/identity-management and am curious if you find your ideas are similar to mine.

Unfortunately the textarea to leave my comments here is uhm… 8 characters wide so I’m going to keep it short for now. Hoping to hear back from you though.

Fred,

We’re definitely playing in the same ballpark. I sent you an email to follow up.

Btw, you can expand the comment field by dragging the lower right corner.

As you know, I am a troublemaker… I have one word for you

“sensors”

they are trembling at the edge of becoming extremely ubiquitous.

Very very insightful stuff.

I’m working on a couple of ventures which basically assume that evil enterprises will do what they will with your data unless you pay them. There are enterprises which we use that we trust already. If my mobile provider sold on my number and location I’d leave and because I pay them they don’t. There are lots of enterprises which already handle our information responsibly, it is just that the internet seems to think that it is above a contractual duty of care. I think this will change. Free services is one way, but what about all those “private people” who want to share? Ah the money.

Asymmetrical Value

The challenge is that your information means more to you personally than it does to them collectively. Does Flickr really care if you lose your photos? No, they care if lots of people lose photos (and their source of value).

Love the “Sharing is Copying” theme. You should hear the database people in design discussions when I say “it’s the same information, but it’s in two tables” – they freak out.
